[This was originally published in 2009 or so in our now-defunct Spintricity magazine. We recently played this track as a test, after a looong hiatus, and thought about this post.]

I am sure there are several things everybody listens for on their favorite songs. What we are going to do here is actually talk about them.

Radiohead’s “Packt Like Sardines in a Crushd Tin Box”, which really should be called “I’m a Reasonable Man, Get Off My Case”, which is the recurring refrain, starts off with the banging of what sounds like a stick on the edge and side of a steel drum. The drum is very slightly left of center. The strikes vary between hitting the edge, and hitting the side of the drum. The strikes on the side generate subtle secondary vibrations that almost, almost define the shape of the body of the steel drum [or whatever it is. It sounds like our Jamaican steel drum, but when hit on the top and side, and not on the traditional drum-playing surface. The strikes on the top of the steel drum also define the shape of the drum, but much, MUCH more subtly].

What to listen for here is the different decays of the two different strikes, the secondary vibrations of the drum that define its shape, and the solidity of the strikes. Sometimes I can almost hear the stick not quite hit the drum edge straight on, so that it slides a wee little bit against the drum during the strike. Behind the b-bamm-bamm of the steel drum strikes, there is some very faint drumming, on a traditional drum set, in an unrelated rhythmic pattern. I rarely pay that much attention to this background drumming, except to note that it is way, way back in the soundstage.

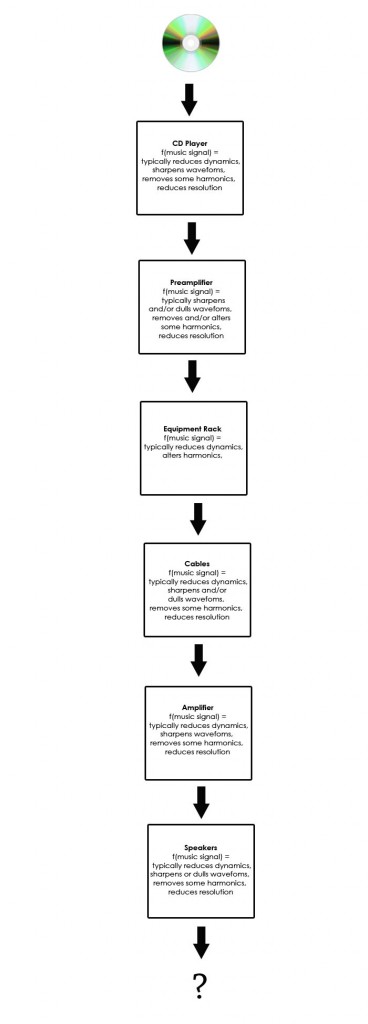

The intro, just described, lasts only a few seconds, and the real intro starts with some aggressive synthesized bass rhythms. Most systems, including those with 2-way speakers, get some satisfaction from this bass track. That doesn’t mean that they play it correctly, just that it sounds darn good anyway and that the bass, although deep, also extends up into the midbass. What I listen for is how tight and authoritative the drum beats are. This requires the entire system to be able to start and stop a note quickly, that is both channels and all components – at the same time. The speakers best able to handle this, in my experience, have been the big Acapella Triolon horns – they would suck all the air out of the room, and then pump it all back in. At least they felt like they were doing that to my chest. The second best, in this regard, was with the Lamm ML3 in our exhibit room at RMAF 2008. In this case, I believe I started to hear little reflections of the bass notes within the notes themselves, i.e. they weren’t all just a simple square wave. At this point I wish I could just stop typing and go listen again to make sure 🙂

Throughout all of this and especially as the song properly starts, there is the idea of the rhythm of the song, in particular the much-maligned idea of PRaT. Not sure why it is maligned, except to think that some joker once said they didn’t think it existed and forum groupthink did the rest. PRaT with respect to Radiohead, including this song, is an interesting and complicated thing. This is not Steppenwolf’s “Born to Be Wild”. There are many sub-melodies and they have their own beats, which are usually only occasionally related to the main beat of the song itself. But if you listen, you can hear that they too have PRaT, and you can tap your toe to them – but people might think that you are listening to a different song than they are [and you would be!].

The vocals come in at this point, and a few more melodies. Vocals are, as usual here, understated. There is emotion, but it takes a lot of resolution to hear it, and the ability to handle subtleties like the constriction of a throat after a particular word, and the shape of the mouth after, say, the word ‘case’. Is he pleading or demanding, threatening or entreating? He is not a crooner, belting it out so the whole world knows how he feels. He is just a reasonable man, like [most of] us, and is telling you how it is. Listening to how his lips move, listening to his breathing, and fancy not so ordinary guy stuff like tremolo, helps define the song itself for me. Certainly, a system like one with Audio Note electronics, Jorma Design PRIME cables, and Kharma speakers makes him sound almost actually emotional [?]. Most systems at shows do not have sufficient resolution and subtlety, and he sounds cold and unemotional and distant.

At this time, along with the voice, the keyboards start up a melody. I listen to this specifically to hear how much harmonic color there is in each note. Note, by harmonic color I do not mean smearing and overemphasis of particular frequencies to the exclusion of others. What I mean by excellent harmonic color is that each subtone be fully fleshed out and not dominated by, nor dominating, other nearby frequencies and overtones as they actually exist in the music.

For example, and this is still before the 1 minute mark, there is a brassy plucking sound in the left channel that starts up. This should have good rhythm, good brassy color, and good separation, especially from all the other stuff that is going on.

And that is the key to Radiohead, at least from the standpoint of my enjoying it so much: having lots of separation. I can pick and choose, using my ears, any number of very entertaining and interesting melodies to listen to: the drums, the electronic beat, the steel drum, the voice, and the weird sounds. The weird sounds often get lost on many sound systems.

About this time a recurring constant tone is started. Its blandness and lack of texture can be annoying. It does have SOME texture, but so little that it is very hard to hear.

At about the 1 minute point, the voice picks up some gravelly overtones. Most systems seem to catch at least some of this.

At about the 1:40 point, there is a sound that sounds like a voice played backwards, over and over. Kind of a fffwwwhhhtt fwht fwht sound. [OK, well YOU try and pronounce it ?]

At about the 2:30 point, the electrified voices and breathy sigh are fun. All through this song, more and more things start up in the background. Many of these things sound like voices, and it is all about delicacy, the purity of the tone, and the quickness and the separation: getting the sound to appear and disappear quickly and in isolation from the rest of what is going on.

At the 3 minute mark, there are lots of weird voices now. Some of them move back and forth between the speakers. How well does the system keep these separate from the song? There is a sound that I call a pig sound [kind of like on Pink Floyd Animals… but not]. How well resolved are the subtones in this noise? Can you almost make out words? There are cymbals being struck way, way in the back of the soundstage. The electronic beat is still a-beating. The voice still has a beat of its own. At this point, most systems just jumble everything together. You can hear that there are noises – but not as something separate, as if each came from a different instrument than the rest of the sounds. Does everything still have a separate and distinct well-defined beat? Or is it jumbled so much that all the different beats add up to having no distinct beats at all – and it just sounds like a badly recorded bar band?

The last word of the song, ‘case’, as in ‘get off of my case’, has a long drawn out ssssss. This is often a good test of sibilance.

The song then winds down after 10 seconds or so. This is usually anticlimactic. It is anticlimactic because 1) we are so burnt out by listening intently to everything and trying to identify the pluses and minuses of a particular system configuration, and the corollary task of trying to express that in English to each other, and the other task of trying to figure out WHY it now sounds the way it does, and what cable/part is the most responsible for the current performance level. OR, 2) we are so overwhelmed by the intensity and beauty and power of the sound that we have to wait a little to recover from it all. 2, of course, is the most fun and it still happens on a regular basis, thank goodness.

Originally, when I started using this as a test track, it was just a nicely melodic song with a few things going on in the soundstage that I enjoyed and thought would be mostly acceptable to play at shows. It was later, when I played it at shows, that I learned just how hard it was for most systems to get anything near the right sound in the right places with this song.

It was only later that I listened deeper when using this music, looking for lots of separation and correct attack and decay. This is represented in my head by requiring that the images of the sounds on the soundstage be well-defined – but it is really also a requirement that the elapsed time and harmonic structure of the note be well-defined as well.

And it has been quite recently, since the influx of top-end gear here last year [2008. Editor], that I realized that it is indeed possible to hear each strand of music, on its own, just like you can when you hear sound in the real world. The surprising things here are 1) why didn’t we notice that this was so hard to do before? 2) Surprise that this is yet another thing that humans can do without and not whine much about [like B&W versus color TV], 3) what goes into making it easier to hear on this system? 4) We are doomed because we are now addicted.

After this discovery, we notice how we can and cannot do this ‘listen to a single instrument’ thing on other systems and it has especially become a new thing to listen for with this song.

Finally, this is still my primary test song. But… I have started playing various other Radiohead songs as test songs, because I see burn out on the horizon for this song – and I like it too much to let that happen.